One of the things that still worries me about Robodebt is the attention to detail.

By that, I am not referring to the crude system by which hundreds of thousands of Australians on benefits received letters between 2016 and 2019, wrongly demanding they repay Centrelink money they did not owe.

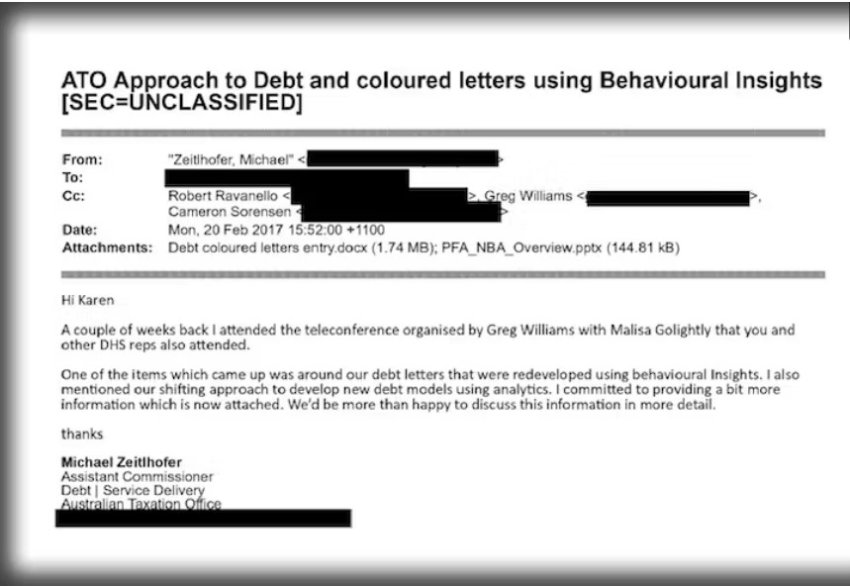

I am referring to the care with which the Robodebt letters were designed and the so-called science behind those devastating design decisions.

What Centrelink wanted was for the recipients to quietly pay up, or go online and provide years of pay slips they probably didn’t have, rather than jam up its switchboards asking questions.

The Robodebt royal commission heard that details as specific as the colours of the letters were decided on after receiving advice from “experts in behavioural science”. (In the end, Centrelink went with black and white.)

So it made what Royal Commissioner Catherine Holmes found was a “conscious decision” not to include a phone number recipients could use to find out more.

That’s right, the letter didn’t include a phone number — a decision Holmes found was made “with the intention of forcing recipients to respond online”.

Where did the idea come from?

Holmes found it came from “behavioural insights”.

The human toll of powerlessness

People left with nowhere to turn and without ready access to, or familiarity with, using the internet felt powerless.

Witnesses told Holmes they wanted to end their lives. Holmes devotes an entire chapter to those who did.

Holmes found that while “behavioural insights” were sought, “no outside parties with an interest in welfare were consulted in order to understand how the scheme might actually affect people”.

Holmes wrote: "The effect on a largely disadvantaged, vulnerable population of suddenly making demands on them for payment of debts, often in the thousands of dollars, seems not to have been the subject of any behavioural insight at all."

And that’s the problem with the relatively new technocratic-sounding science of behavioural economics.

‘Choice architects’ shape policy

That Centrelink used specialists in behavioural science ought not be surprising.

A year before Robodebt began, the then prime minister Malcolm Turnbull set up what he called a Behavioural Economics Team Australia (BETA) unit in his department.

It was modelled on the so-called “nudge units” set up by former US president Barack Obama and former British prime minister David Cameron.

A “nudge” is a change designed to get someone to do something. The practice is sometimes also known by the Orwellian-sounding name “choice architecture”.

Cass Sunstein helped invent both those terms, coauthored the book Nudge, and headed Obama’s Nudge Unit. In 2015, Sunstein launched Malcolm Turnbull’s unit.

I was a fan of behavioural economics, back when Turnbull set up his nudge unit. Now, after Robodebt, I’m starting to suspect much of it is no science at all.

Hollow science

Real science not only examines cause and effect but also develops a theory of the mechanism by which that effect takes place. That’s another way of saying a real science examines more than correlations.

Psychology is one such real science; economics is (usually) another.

But the more I’ve looked at it, the more often behavioural economics seems hollow: not concerning itself enough with what needs to happen for results to be achieved.

The Behavioural Economics Team Australia is still active in the Prime Minister’s department. Its website lists dozens of projects that look useful: how to lift organ donation rates, how to make energy bills easier to understand and how to get people to take part in the census.

Yet — and I am aware of the irony — even the best-known choice architects have sometimes lacked insight into their own work.

One of the most famous findings in behavioural economics, in a 2012 paper, was that people who signed an honesty declaration at the beginning of a form, rather than the end, were less likely to lie. Two years ago the paper was retracted amid allegations the data was false.

Blind to empathy

So widespread are behavioural economics “findings” that cannot be replicated that the prime minister’s BETA unit has done a podcast on the “replication crisis”.

And now, under the Albanese government, there’s another unit. This one is being set up in Treasury under the eye of Competition Minister Andrew Leigh and will be called the Australian Centre for Evaluation.

Its brief, a bit like BETA, will be to find out what works.

But if it only does that, without examining how it works, it risks being as blind to the potential costs on real people as the “behavioural insights” that shaped the Robodebt letters.

[This piece was first published in The Conversation on July 25. Peter Martin is a Visiting Fellow at Crawford School of Public Policy, Australian National University.]